Multi-language AI interviewing: what it is and why it matters

TL;DR:

- Multi-language AI interviews operate natively in candidates’ preferred languages, avoiding translation errors.

- They expand talent pools, promote fairness, and provide consistent evaluation across different languages.

- Validation with real native speakers is essential to ensure accuracy and fairness in multilingual AI hiring.

Most hiring teams assume AI interviews perform equally well in every language. That assumption is wrong, and it’s costing companies real talent. Global workforces don’t operate in English alone, and yet the default expectation for AI interviewing tools has always been English-first. The demand for interviews that work natively in Spanish, Mandarin, Arabic, French, and dozens of other languages is growing fast. This article breaks down exactly how multi-language AI interviewing works, why it delivers genuine advantages for both employers and candidates, where current technology still falls short, and what you can do right now to make it work reliably for your hiring process.

Table of Contents

- Understanding multi-language AI interviewing

- Core benefits for employers and job seekers

- How multi-language AI interviewing platforms work

- Challenges and reliability across languages

- Best practices for implementing multi-language AI interviews

- Our perspective: Why native-language validation matters most

- Explore innovative multi-language AI interviews with ParakeetAI

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Native language matters | AI interviews work best when conducted in the candidate’s native language, not simply translated from English. |
| Standardized evaluation | Consistent rubrics and structured questions help ensure fairness across all languages. |
| Real-world validation is essential | Employers should always test AI interview workflows in each target language before widespread rollout. |
| Not all languages perform equally | AI’s accuracy varies by language, so expectations and testing should adjust accordingly. |

Understanding multi-language AI interviewing

Multi-language AI interviewing refers to automated interview processes where the AI system communicates, questions, transcribes, and evaluates candidates natively in a chosen language, not through a translation layer applied after the fact. This distinction matters more than most people realize.

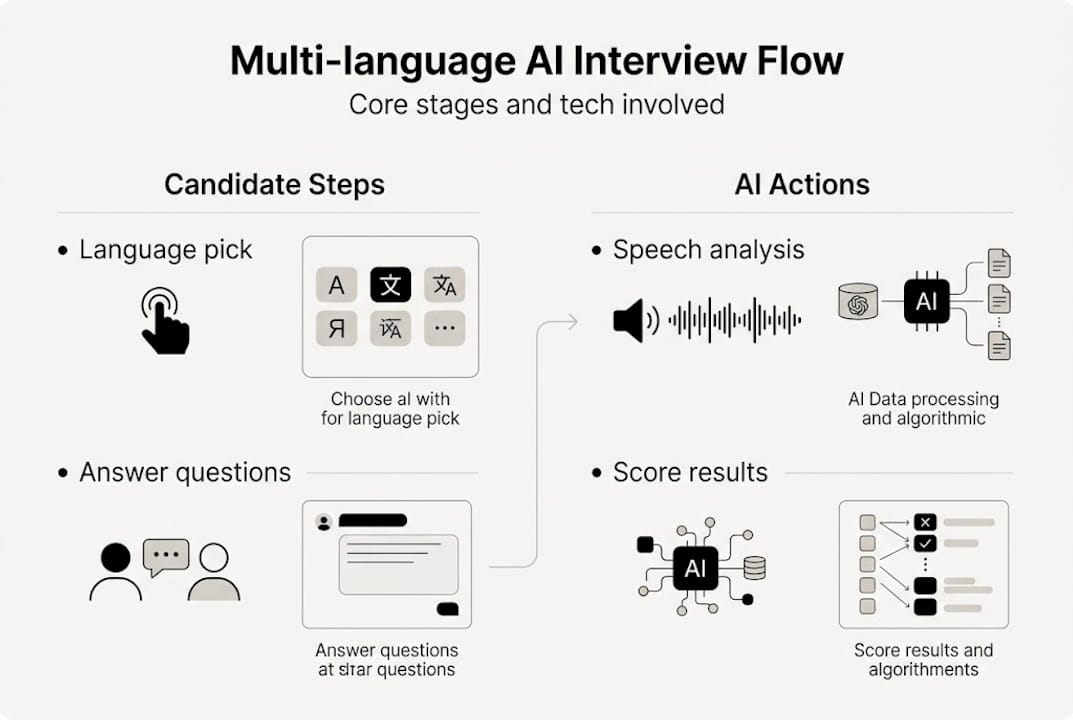

Here’s how it typically works in practice. A candidate or employer selects a preferred language at the start of the session. The AI adapts its interface, prompts, and conversational flow to that language. Questions are asked, responses are recorded, and transcription happens in the same language throughout. Evaluation then follows a standardized criterion-based rubric, meaning the same structured framework applies regardless of the language used. AI interview technology explained covers the foundational mechanics behind these systems if you want to go deeper.

The key difference between native multi-language support and translation-based approaches is quality and consistency. A translation-based system takes an English interview, translates it into French, collects responses, and translates them back for scoring. Every translation step can introduce errors, lose cultural nuance, and skew scoring. Native multi-language systems avoid that by operating in the target language from start to finish. As outlined in the multi-language workflow, the full process involves language selection, AI communication in the target language, and structured evaluation.

The result is a far more natural experience for candidates. Instead of parsing questions that feel slightly awkward due to machine translation, they engage with prompts that read and sound like they were written by a native speaker. That comfort level directly affects response quality and fairness.

Key features of native multi-language AI interviewing include:

- Language selection at session start, either by the user or auto-detected by the platform

- Native question delivery in the target language without intermediate translation

- Same-language transcription that preserves meaning and tone accurately

- Standardized evaluation rubrics applied consistently regardless of language

- Interface localization including instructions, prompts, and feedback

Pro Tip: If you’re a candidate, explicitly confirm your preferred language at the start of the session rather than assuming the system will detect it. One sentence stating your preference can prevent a frustrating mismatch before the interview even begins.
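
On the platform side, the language-selection step described above might be sketched as follows. This is a minimal illustration, not any vendor's actual logic: the function names, the `supported` list, and the English fallback are all assumptions made for the example. It gives an explicit user choice priority and only then falls back to the browser's `Accept-Language` header.

```python
from typing import Optional, Sequence


def parse_accept_language(header: str) -> list[str]:
    """Return the language tags in an Accept-Language header, best first."""
    weighted = []
    for position, part in enumerate(header.split(",")):
        piece = part.strip()
        if not piece:
            continue
        tag, _, params = piece.partition(";")
        quality = 1.0  # a tag without an explicit q-value counts as 1.0
        params = params.strip()
        if params.startswith("q="):
            try:
                quality = float(params[2:])
            except ValueError:
                quality = 0.0
        # Sort by descending quality, then by original position in the header.
        weighted.append((-quality, position, tag.strip().lower()))
    return [tag for _, _, tag in sorted(weighted)]


def resolve_session_language(
    explicit_choice: Optional[str],
    accept_language: str,
    supported: Sequence[str],
    default: str = "en",  # hypothetical fallback, chosen for illustration
) -> str:
    """Pick the interview language: explicit choice first, then browser hints."""
    supported_set = {code.lower() for code in supported}
    candidates = []
    if explicit_choice:
        candidates.append(explicit_choice.lower())
    candidates.extend(parse_accept_language(accept_language))
    for tag in candidates:
        if tag in supported_set:
            return tag
        primary = tag.split("-")[0]  # "es-mx" falls back to "es"
        if primary in supported_set:
            return primary
    return default
```

With `supported=["en", "es", "fr"]`, a header of `fr-CA,fr;q=0.9,en;q=0.8` and no explicit choice resolves to `fr`, while an explicit choice of `es` wins regardless of the header. That ordering mirrors the tip above: a stated preference should override detection.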

Core benefits for employers and job seekers

With the basics laid out, it’s vital to understand why these systems matter for both hiring teams and candidates.

For employers, the most immediate benefit is access to a dramatically larger talent pool. When your interview process is locked to English, you automatically filter out qualified candidates whose skills are real but whose English fluency is limited. Multi-language AI interviewing removes that filter and evaluates candidates on actual job-relevant competencies instead.

Fairness also improves significantly. Structured questions and objective rubrics contribute to more consistent and fair evaluations across languages. Instead of one interviewer favoring a candidate who interviews well in English and another penalizing a strong candidate for accent alone, the AI applies the same scoring logic to every response in every language.

For candidates, the difference is confidence. When you interview in your native language, you demonstrate your actual thinking ability, not just your ability to translate thoughts in real time. That’s a fairer test of job readiness.

Here’s a direct comparison of approaches:

| Feature | Traditional interview | Translation-based AI | Native multi-language AI |
|---|---|---|---|
| Language flexibility | Limited by interviewer | Post-process translation | Native from start |
| Evaluation consistency | Variable by interviewer | Moderate (translation errors) | High (standardized rubrics) |
| Candidate comfort | Low for non-native speakers | Mixed | High |
| Scalability | Low | Moderate | High |
| Global talent access | Limited | Partial | Full |

Additional benefits that directly affect hiring outcomes include:

- Faster screening at scale: AI can interview thousands of candidates simultaneously in different languages without scheduling bottlenecks

- Bias reduction: removing human subjectivity from language assessment leads to fairer hiring decisions

- Consistent candidate experience: every applicant gets the same structured process regardless of location

Explore AI for interview consistency and why use AI for interviews to see more on how these advantages compound over time.

How multi-language AI interviewing platforms work

To see how these benefits are delivered in practice, let’s look at the actual workflow powering multi-language AI interviews.

The process follows a clear sequence of steps. Understanding each one helps both employers configuring systems and candidates navigating them.

- Language selection: The platform prompts the user to select or confirm their preferred language. Some platforms auto-detect based on browser settings or prior profile data.

- Interface adaptation: The full UI, including instructions, question prompts, timer labels, and help text, switches to the target language.

- AI-led questioning: The AI presents questions natively, using culturally appropriate phrasing rather than literal translations.

- Response recording: Candidates answer via voice, video, or text depending on the platform and role requirements.

- Native transcription: Responses are transcribed in the target language, preserving the original meaning without translation loss.

- Objective evaluation: Answers are scored against standardized rubrics, with scoring logic applied uniformly across all languages.

As the platform workflow shows, multi-language AI interviewing follows this stepwise process from language selection through objective evaluation.
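
To make the sequence concrete, here is a rough sketch of how the stages could be wired together. Everything in it is hypothetical: `run_interview`, `InterviewSession`, and the three callables stand in for whatever capture, speech-to-text, and scoring components a real platform uses. The point it illustrates is that the same language code is passed to every stage and no translation step sits in between.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class InterviewSession:
    language: str
    questions: list[str]
    transcripts: list[str] = field(default_factory=list)
    scores: list[float] = field(default_factory=list)


def run_interview(
    language: str,
    question_bank: dict[str, list[str]],
    capture_answer: Callable[[str, str], bytes],      # (question, language) -> raw response
    transcribe: Callable[[bytes, str], str],          # (raw response, language) -> transcript
    score_answer: Callable[[str, str, str], float],   # (question, transcript, language) -> score
) -> InterviewSession:
    """Run every stage in the session language, with no translation in between."""
    if language not in question_bank:
        # Fail loudly rather than silently falling back to translated questions.
        raise ValueError(f"no natively authored questions for language {language!r}")

    session = InterviewSession(language=language, questions=list(question_bank[language]))
    for question in session.questions:
        raw = capture_answer(question, language)        # response recording
        transcript = transcribe(raw, language)          # native transcription
        session.transcripts.append(transcript)
        session.scores.append(score_answer(question, transcript, language))  # rubric scoring
    return session
```

Passing the stages in as callables keeps the sketch testable with simple stand-ins, and it makes the design choice visible: a language with no natively written question set raises an error instead of quietly reusing translated English prompts.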

The technology stack behind this varies. Voice AI in interviews handles spoken response recognition in multiple languages, while text-based AI manages written answers and structured evaluation. AI tools for remote interviews add video analysis layers for non-verbal cues in some platforms.

Here’s a quick reference for the core workflow stages:

| Stage | Technology involved | Key output |
|---|---|---|
| Language selection | User input or auto-detect | Session language confirmed |
| Interface adaptation | Localization engine | Fully localized UI |
| Questioning | Large language model | Native-language prompts |
| Recording | Voice, video, or text capture | Raw candidate response |
| Transcription | Speech-to-text model | Native-language transcript |
| Evaluation | Scoring rubric engine | Structured candidate score |

Pro Tip: Before rolling out multi-language interviews to candidates, run the full workflow yourself in every target language. Small UX issues, like a button label that didn’t localize or an auto-advance timer that fires too quickly, only show up when you actually go through the experience as a candidate would.

Challenges and reliability across languages

Of course, no technology is perfect. Let’s explore some important challenges and reliability issues with multi-language AI interviewing.

The single most important thing to understand is that AI performance is not equal across languages. English typically yields the most reliable results because English-language data dominates AI training sets. Languages with smaller digital footprints, sometimes called low-resource languages, often show measurable drops in transcription accuracy, question coherence, and evaluation consistency.

Multilingual LLMs show performance gaps on non-English tasks, and benchmarks translated from English are a less reliable measure than benchmarks written natively in the target language. Platforms claiming equal performance across 50 languages should therefore be scrutinized carefully.

Specific challenges include:

- Transcription accuracy gaps: Speech-to-text models trained primarily on English often miss regional accents, dialects, and pronunciation variations in other languages

- Semantic drift: Structured questions can lose precision during adaptation into lower-resource languages, changing what the question actually tests

- Benchmark unreliability: Studies show that measuring AI performance in other languages with benchmarks translated from English overestimates actual capability

- Evaluation rubric misalignment: Scoring criteria built around English-language response patterns may not translate cleanly into languages with different syntactic structures

This is not a reason to avoid multi-language AI interviewing. It’s a reason to approach it with rigor. Checking AI reliability in interviews can help you set the right benchmarks and avoid overconfidence in platform marketing claims.

The best practice is to validate your interviewing workflow with real candidates in each target language before making hiring decisions based on AI scores. Reviewing a handful of actual interviews in each language tells you far more than any spec sheet.
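
One way to put numbers on that review is to have a native speaker correct a few AI transcripts and then measure how far the AI output was from the corrected version. The sketch below uses word error rate, a standard speech-recognition metric. It is offered as one possible check, not as a method the platforms described here are known to use, and the function names are illustrative. For languages written without spaces between words, such as Mandarin, the `unit="char"` option computes a character-level rate instead.

```python
def edit_distance(ref: list[str], hyp: list[str]) -> int:
    """Levenshtein distance between two token sequences."""
    previous = list(range(len(hyp) + 1))
    for i, ref_tok in enumerate(ref, start=1):
        current = [i]
        for j, hyp_tok in enumerate(hyp, start=1):
            cost = 0 if ref_tok == hyp_tok else 1
            current.append(min(
                previous[j] + 1,         # token missing from the AI transcript
                current[j - 1] + 1,      # extra token in the AI transcript
                previous[j - 1] + cost,  # match or substitution
            ))
        previous = current
    return previous[-1]


def error_rate(reference: str, hypothesis: str, unit: str = "word") -> float:
    """Edit distance divided by reference length, per word or per character."""
    if unit == "word":
        ref, hyp = reference.split(), hypothesis.split()
    elif unit == "char":
        ref = list(reference.replace(" ", ""))
        hyp = list(hypothesis.replace(" ", ""))
    else:
        raise ValueError("unit must be 'word' or 'char'")
    if not ref:
        raise ValueError("reference transcript is empty")
    return edit_distance(ref, hyp) / len(ref)


def mean_error_rate(pairs: list[tuple[str, str]], unit: str = "word") -> float:
    """Average error rate over (human reference, AI transcript) pairs."""
    rates = [error_rate(ref, hyp, unit) for ref, hyp in pairs]
    return sum(rates) / len(rates)
```

Comparing the mean rate for each target language against the rate for English gives a rough, role-specific picture of where transcription is weakest. It does not replace listening to the interviews, since a low error rate can still hide a mis-transcribed key term.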

Best practices for implementing multi-language AI interviews

Equipped with a balanced view of what works and what doesn’t, here are practical steps for successful multi-language AI interviews.

For employers, implementation discipline is everything. These steps help you build a system that’s actually fair and functional:

- Audit the platform’s language capabilities: Ask for real performance data in your specific target languages, not just a list of supported languages.

- Run pilot interviews with native speakers: Hire or partner with bilingual testers to complete the full interview workflow and flag any issues.

- Validate evaluation rubrics per language: Check that scoring criteria translate accurately and measure what you intend to measure.

- Involve local experts: Bilingual staff or regional hiring partners can catch cultural mismatches that purely technical testing misses.

- Monitor and adjust continuously: Track score distributions and candidate feedback across languages after launch and investigate any unexpected patterns.

As multilingual AI research confirms, employers should validate multi-language AI workflows with real hiring scenarios for each language rather than relying on technical specs alone.
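
The monitoring step in the list above can start very simply. The following sketch compares each language's mean score with a baseline language and flags large gaps for human review. The function name, the English baseline, and both thresholds are assumptions picked for illustration and would need tuning to your own scoring scale and volumes.

```python
from statistics import mean


def flag_language_gaps(
    scores_by_language: dict[str, list[float]],
    baseline: str = "en",
    max_gap: float = 0.5,    # illustrative threshold on the rubric's own scale
    min_samples: int = 30,   # skip languages with too few interviews to compare
) -> dict[str, float]:
    """Return languages whose mean score differs from the baseline by more than max_gap."""
    baseline_scores = scores_by_language.get(baseline)
    if not baseline_scores:
        raise ValueError(f"no scores recorded for baseline language {baseline!r}")
    baseline_mean = mean(baseline_scores)

    flagged = {}
    for language, scores in scores_by_language.items():
        if language == baseline or len(scores) < min_samples:
            continue
        gap = mean(scores) - baseline_mean
        if abs(gap) > max_gap:
            flagged[language] = round(gap, 3)
    return flagged
```

A flagged gap is a prompt to investigate, not proof of a faulty model: candidate pools differ by region and role, so the next step is the kind of review by bilingual staff described in this section.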

For candidates, preparation looks different when interviewing in your native language via AI. Review AI interview best practices to get a complete picture, but the core principle is to treat the AI as you would a human interviewer. Speak clearly, answer specifically, and confirm the language setting before you begin.

Pro Tip: If you’re managing hiring across multiple regions, involve bilingual staff members in reviewing AI interview outputs for at least the first few cohorts in each language. Their feedback will surface calibration issues that data alone won’t reveal.

Our perspective: Why native-language validation matters most

Stepping back from technicalities, here’s our candid take after seeing dozens of multi-language AI setups in action: most organizations trust platform marketing about multi-language support without ever testing it against real hiring scenarios.

The spec sheet says 40 languages. The actual experience in 15 of them is noticeably worse. And no one finds out until a qualified candidate gets an inaccurate score or a confusing question that lost meaning in adaptation. By then, the damage to candidate experience and hiring quality has already happened.

The uncomfortable truth is that performance benchmarks cannot guarantee fairness. They measure average accuracy across test sets, not the specific candidate experience in your industry, your roles, and your regional dialects. Native-language validation, where real people go through the workflow and flag real problems, is the only method that actually works.

For teams serious about global hiring, the investment in that validation is small compared to the cost of a broken process. Exploring more on AI interview platforms can help your team ask the right questions before committing to any solution.

Explore innovative multi-language AI interviews with ParakeetAI

If you’re ready to put these principles into practice, ParakeetAI is built for exactly this moment. Whether you’re a job seeker preparing to interview in your native language or an employer scaling hiring across global markets, the technology behind multi-language AI interviewing solutions is designed to make every interview more accurate, fairer, and more efficient.

ParakeetAI listens to your interview in real time and provides AI-powered answers tailored to the question being asked, in the language you’re using. For employers, that means faster screening with consistent evaluation. For candidates, it means confidence in every session. Take the next step and explore what genuinely multilingual AI interviewing looks like in practice.

Frequently asked questions

What makes multi-language AI interviewing different from traditional interviews?

Multi-language AI interviewing allows both employers and candidates to interact in their preferred language, with AI handling questions, evaluation, and transcription natively. The full workflow covers language selection through structured evaluation without manual translation steps.

How accurate are AI interviews in non-English languages?

Accuracy can be lower in non-English languages, particularly for lesser-resourced ones. Research indicates that multilingual LLMs have performance gaps on non-English tasks and that translated benchmarks overestimate actual capability.

Can candidates choose their preferred interview language?

Yes. Most platforms let users select their preferred language at the start, or detect it automatically. As the platform overview confirms, the system then conducts the interview natively in that language throughout the entire session.

How can employers validate the fairness of AI interviews across languages?

The most effective method is running real hiring scenarios in each target language with native-speaking testers. Multilingual AI research confirms that relying only on technical claims or translated benchmarks is insufficient for ensuring genuine fairness.