AI accountability in interviews: fairness & transparency

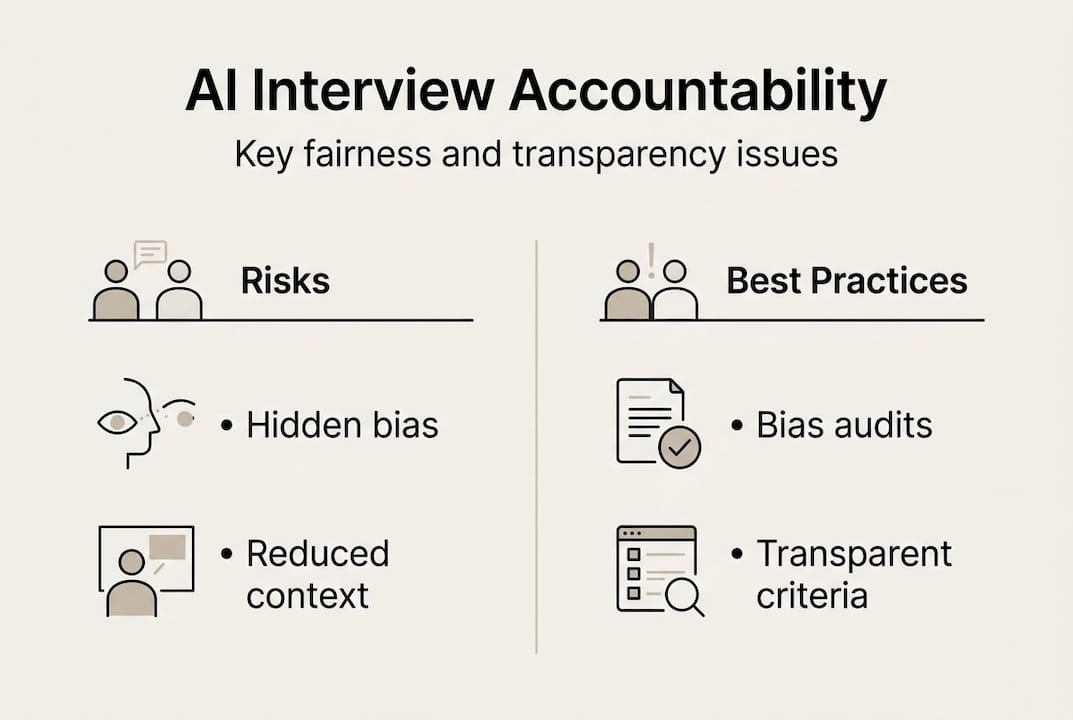

TL;DR:

- AI interview tools can favor early applicants, impacting fairness and candidate diversity.

- Responsible AI use requires fairness, transparency, explainability, and legal compliance.

- Employers must conduct bias audits and involve human oversight to ensure ethical hiring practices.

Imagine submitting a resume and learning an AI system ranked you poorly before a human ever read your name. That’s not a hypothetical. When evaluating identical candidates, AI interview tools have favored the first resume reviewed as often as 86% of the time, meaning your position in a queue could matter more than your qualifications. Modern hiring increasingly relies on AI for resume screening, asynchronous video interviews, and automated scoring. Yet most job seekers and hiring managers don’t know how these tools work or who’s responsible when they go wrong. Understanding AI accountability isn’t just an academic exercise. It directly shapes who gets hired and who gets filtered out.

Table of Contents

- Defining AI accountability in interviews

- How AI is used in interviews and where accountability matters

- Best practices for ensuring AI interview accountability

- Contested debates and evolving regulations in AI accountability

- A practical perspective on accountability in AI-powered interviews

- Explore smarter, fairer interview tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI accountability defined | It ensures hiring technology is fair, transparent, and follows anti-discrimination standards. |
| Bias risks persist | AI can reduce but also introduce new hiring biases, so continuous monitoring is essential. |
| Best practices matter | Bias audits, human oversight, and clear structures foster trustworthy interview processes. |
| Both sides play a role | Job seekers and hiring managers should know their rights and responsibilities regarding AI in interviews. |
| Regulation is evolving | New laws and guidelines are emerging to improve AI accountability but gaps remain. |

Defining AI accountability in interviews

With the need for fairness clear, let’s define what AI accountability actually means in the interview context. AI accountability in recruitment is the set of practices, standards, and oversight mechanisms that ensure hiring technologies make decisions that are fair, explainable, and legally sound. It’s not just about avoiding lawsuits. It’s about building systems that treat every candidate as a person, not a data point.

At its core, responsible AI accountability covers six essential pillars: fairness, transparency, explainability, reliability, privacy, and compliance with anti-discrimination laws. These aren’t optional features. They’re the baseline for any organization that wants to use AI ethically in its hiring pipeline.

Here’s what each pillar means in practice:

- Fairness: AI decisions should not systematically disadvantage any group based on race, gender, age, or disability.

- Transparency: Candidates and hiring teams should know when AI is being used and what data it processes.

- Explainability: Decisions made by AI should be interpretable. “The algorithm said no” is not an acceptable reason to reject a candidate.

- Reliability: AI tools should perform consistently across different candidate pools and over time.

- Privacy: Personal data collected during AI-driven interviews must be handled according to applicable privacy laws.

- Compliance: Tools must align with regulations like the Equal Employment Opportunity Commission (EEOC) guidelines and other anti-discrimination frameworks.

“Accountability in AI hiring is not a single checkbox. It is an ongoing commitment to transparency, fairness, and meaningful human oversight at every stage of the process.”

For hiring managers, accountability means choosing tools that can be audited and explained. For job seekers, it means having the right to understand how your data was used and to challenge decisions that seem unfair. AI interview compliance is increasingly seen as a legal and ethical requirement, not a competitive differentiator.

When accountability breaks down, the consequences are real. Candidates from marginalized groups face compounded disadvantages. Employers face regulatory risk. And organizations miss out on talent that doesn’t fit a flawed algorithm’s idea of a “good candidate.”

How AI is used in interviews and where accountability matters

Now that you know the core principles, let’s see how AI is actually being used in today’s interview processes and where accountability becomes essential.

AI shows up in hiring in four major ways: resume screening, asynchronous video interviews, video assessment scoring, and fully automated AI interviewers. Each carries its own accountability risks.

Resume screening tools parse keywords and rank applicants before a human ever reviews them. Asynchronous interviews ask candidates to record answers to preset questions, which AI then scores on tone, phrasing, and even facial expression. Video assessment tools analyze body language and speech patterns. AI interviewers conduct full conversations without any human present.

Here’s where the bias enters. AI interviewers show positional bias, favoring the first resume 63 to 86% of the time when evaluating identical candidates. That single flaw can eliminate a qualified person purely because of when their file was uploaded.
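The positional bias described above can be checked empirically. The sketch below is a minimal illustration, not any vendor's method: it shuffles the same set of resumes many times and records how often the tool picks whatever landed in the first slot. The functions `position_biased` and `content_based` are hypothetical stand-ins for a real screening tool, and the sample resumes are invented. For n interchangeable candidates, an order-blind tool should pick the first slot roughly 1/n of the time.

```python
import random
from typing import Callable, Sequence


def first_slot_pick_rate(
    pick_best: Callable[[Sequence[str]], int],
    resumes: Sequence[str],
    trials: int = 3000,
    seed: int = 0,
) -> float:
    """Fraction of trials in which the tool picks whatever sits in slot 0."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        queue = list(resumes)
        rng.shuffle(queue)           # randomize who lands first in the queue
        if pick_best(queue) == 0:    # the tool returns the index it prefers
            hits += 1
    return hits / trials


# Hypothetical stand-ins for a real screening tool (assumptions, not real APIs).
def position_biased(queue: Sequence[str]) -> int:
    return 0  # always prefers the first resume it sees


def content_based(queue: Sequence[str]) -> int:
    # Picks on content only (here, the resume listing the most skills).
    return max(range(len(queue)), key=lambda i: queue[i].count(","))


resumes = ["sql", "sql, python", "sql, python, go"]  # invented sample data
biased_rate = first_slot_pick_rate(position_biased, resumes)
neutral_rate = first_slot_pick_rate(content_based, resumes)
print(f"biased: {biased_rate:.2f}, content-based: {neutral_rate:.2f}")
```

A first-slot rate far above 1/n for comparable candidates is a signal worth raising with the vendor before the tool touches real applicants.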

| AI Tool | Primary Use | Key Accountability Risk |
|---|---|---|
| Resume screener | Filter applicants by keywords | Positional bias, keyword mismatch |
| Async video interview | Record and score responses | Drop-off rates, gender disparity |
| Video assessment AI | Analyze tone and expression | Intersectional bias, calibration drift |
| AI interviewer | Conduct full interviews | Lack of explainability, fraud detection errors |

The numbers are striking. Some studies report that AI pipelines improve success rates by 20%, but async interview formats cut candidate continuation by 50%, with women disproportionately affected. So the same tool that speeds up hiring can quietly narrow your talent pool.

Pro Tip: If you’re a job seeker entering an async AI interview, treat it like a structured presentation. Record in a neutral environment, speak clearly, and stay on topic. AI scoring systems often penalize ambient noise and off-topic responses more than human reviewers would.

For employers, AI job interview ethics demand regular review of which tools are used and what they’re actually measuring. For candidates, understanding AI assistants in hiring helps you navigate these systems with more confidence.

Best practices for ensuring AI interview accountability

With the risks understood, let’s break down the leading practices and what you can do whether you’re a recruiter or a candidate.

For employers, the gold standard starts with bias audits. Tools like AIF360 and Fairlearn are open-source frameworks that measure demographic disparities in AI outputs. Running regular audits before and during deployment can catch skewed results before they affect real candidates.

Here are the core steps every hiring organization should take:

- Conduct pre-deployment bias testing across race, gender, age, and disability status.

- Apply adverse impact analysis to ensure no group is filtered out at a disproportionate rate.

- Use structured scoring rubrics so AI evaluations map to defined, job-relevant criteria.

- Establish an AI governance council to oversee tool selection, audits, and policy updates.

- Choose task-specific AI over general-purpose models to reduce unpredictable outputs.

- Continuously monitor for drift, which is the gradual shift in AI behavior over time as data patterns change.
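The adverse impact step in the list above can be sketched in a few lines. This example assumes the widely used four-fifths rule of thumb: a group whose selection rate is below 80% of the highest group's rate is flagged for closer review. The group labels and counts are synthetic, the function names are illustrative, and the threshold is a screening heuristic rather than a legal determination.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of each group that advanced. outcomes: (group, advanced) pairs."""
    totals: Counter = Counter()
    advanced_counts: Counter = Counter()
    for group, advanced in outcomes:
        totals[group] += 1
        if advanced:
            advanced_counts[group] += 1
    return {g: advanced_counts[g] / totals[g] for g in totals}


def impact_ratios(rates: Dict[str, float]) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody advanced, so no disparity to report
    return {g: r / best for g, r in rates.items()}


def flagged_groups(ratios: Dict[str, float], threshold: float = 0.8) -> List[str]:
    """Groups whose ratio falls below the four-fifths threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Synthetic screening outcomes: 50 of 100 advance in group A, 30 of 100 in group B.
outcomes = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
print(flagged_groups(ratios))  # ['B']
```

Open-source toolkits such as AIF360 and Fairlearn offer richer disparity metrics. The plain-Python version here only shows the arithmetic behind the check.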

| Best practice | For hiring managers | For job seekers |
|---|---|---|
| Transparency | Disclose AI use in job postings | Ask what AI tools are being used |
| Bias auditing | Run AIF360/Fairlearn before deployment | Request demographic fairness data |
| Human oversight | Require human review for final decisions | Ask for human review if concerned |
| Structured criteria | Use rubric-based scoring | Prepare for competency-based formats |
| Recourse | Offer appeal or explanation process | Know your right to request explanation |

Pro Tip: If you’re a hiring manager, don’t take an AI vendor’s “bias-free” claim at face value. Ask for third-party audit reports and test outputs yourself with synthetic candidate profiles before going live.
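One way to run the synthetic-profile test from the tip above is a paired comparison: score each profile, then score a twin that differs in a single field that should not matter, and look at the gap. The sketch below is a minimal illustration under stated assumptions. `Profile`, `fair_score`, and `leaky_score` are hypothetical, the names are invented, and a real test would call the vendor's scoring interface instead.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Sequence, Tuple


@dataclass(frozen=True)
class Profile:
    name: str
    years_experience: int
    skills: Tuple[str, ...]


def counterfactual_gaps(
    score: Callable[[Profile], float],
    profiles: Sequence[Profile],
    field: str,
    alternative: object,
) -> List[float]:
    """Score change when only `field` is swapped to `alternative`."""
    gaps = []
    for profile in profiles:
        twin = replace(profile, **{field: alternative})  # identical except one field
        gaps.append(score(twin) - score(profile))
    return gaps


# Hypothetical scorers standing in for a vendor tool (assumptions for illustration).
def fair_score(p: Profile) -> float:
    return 2.0 * p.years_experience + len(p.skills)


def leaky_score(p: Profile) -> float:
    # Simulates a tool that has learned to reward one particular name.
    return fair_score(p) + (5.0 if p.name == "Alex Smith" else 0.0)


profiles = [
    Profile("Alex Smith", 4, ("sql", "python")),
    Profile("Alex Smith", 9, ("java",)),
]
fair_gaps = counterfactual_gaps(fair_score, profiles, "name", "Sam Jones")
leaky_gaps = counterfactual_gaps(leaky_score, profiles, "name", "Sam Jones")
print(fair_gaps, leaky_gaps)
```

Any consistent nonzero gap on a field that is not job-relevant is a reason to ask the vendor for an explanation before going live.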

For job seekers, the fair approach to AI interviews is straightforward: disclose any AI tools you use to prepare, request a human reviewer if you feel an automated decision was unfair, and practice structured, skills-focused responses. Understanding bias in AI interviews helps you recognize when a process may not be working in your favor. And knowing the role of AI for fairness in modern hiring gives you a stronger foundation to advocate for yourself.

Contested debates and evolving regulations in AI accountability

No guide is complete without considering where the experts disagree and how the regulatory landscape is changing.

Optimists argue that AI reduces the inconsistency of human interviewers who get tired, distracted, or subconsciously influenced by a candidate’s appearance. When structured and audited, AI can create more standardized evaluation, especially useful for high-volume hiring. Advocates point to studies where AI outperformed human raters at predicting job success.

Critics counter that standardization isn’t the same as fairness. Audited systems can still embed historical inequities into their training data, creating what some researchers call “bias washing,” where companies appear compliant without addressing root causes. Regulatory frameworks haven’t caught up to the pace of AI adoption, leaving significant gaps.

Technical methods reduce some bias but are not a remedy for deeper justice and regulatory challenges, particularly for marginalized applicants who face compounded disadvantages when AI errors intersect with existing systemic barriers.

Here’s where the regulatory picture stands in 2026:

- EEOC guidance addresses AI use in employment and requires that tools don’t create disparate impact on protected groups.

- NIST AI Risk Management Framework provides voluntary guidelines that many organizations use as a benchmark.

- State and local laws, like New York City’s Local Law 144, require bias audits before deploying automated employment decision tools.

- EU AI Act classifies AI hiring tools as high-risk systems, demanding conformity assessments and transparency obligations.

“Regulation without enforcement is just aspiration. The real test of AI accountability is whether candidates who are harmed have meaningful recourse.”

The debate about whether to automate job interviews at all is legitimate. Speed and scale matter to employers, but not at the expense of candidate dignity. Understanding AI interview compliance means knowing both what’s legally required today and what’s ethically expected tomorrow.

A practical perspective on accountability in AI-powered interviews

With contested viewpoints laid out, here’s a candid assessment of what really matters in practice.

True accountability in AI-driven interviews can’t be reduced to running an annual audit or ticking a regulatory box. What actually moves the needle is persistent transparency, inclusive design from the start, and the courage to override a flawed system when it matters. Most organizations get accountability wrong because they treat it as a compliance exercise rather than a design principle.

Here’s the uncomfortable reality: AI interview ethics demand that both employers and candidates stop deferring to technology as if it were neutral. Algorithms are built by humans, trained on human decisions, and they inherit human blind spots. The best hiring managers we see are those who use AI as a filter, not a gatekeeper, and who build clear appeal processes for candidates who feel a decision was wrong.

For job seekers, this means demanding clarity. Ask what system evaluated you. Ask what criteria it used. The organizations worth working for will have answers. The ones that can’t explain their AI tools probably aren’t using them responsibly.

Explore smarter, fairer interview tools

If you’re ready to experience ethically designed interview AI, here’s where to start.

ParakeetAI is built with accountability at its foundation. Whether you’re a job seeker preparing for a real-time AI-assisted interview or a hiring team looking for transparent, compliant automation, ParakeetAI delivers answers and insights you can actually explain. It listens to your interview live and surfaces responses that are grounded in your experience and the role’s requirements.

For candidates, this means walking into any structured interview with confidence, knowing exactly how your preparation aligns with what AI systems are designed to evaluate. Explore the ParakeetAI interview platform to see how responsible, real-time AI assistance works in practice. Accountability starts with the tools you choose.

Frequently asked questions

What is AI accountability in interviews?

AI accountability in interviews means AI hiring tools are designed and monitored to ensure fairness, transparency, and compliance with anti-discrimination laws. It covers explainability, reliability, and privacy protections for every candidate.

How do employers check AI interview fairness?

Employers use bias audits and fairness metrics alongside human oversight to verify that AI tools don’t produce discriminatory outcomes across demographic groups.

What should job seekers do if they face an AI interview?

Job seekers should ask about AI use upfront, request a human review if concerned, and prepare for structured assessments that focus on demonstrable skills rather than subjective impressions.

Are there laws requiring AI accountability in interviews?

Yes. EEOC guidance addresses AI in hiring, the NIST AI Risk Management Framework offers voluntary guidelines, and local laws like NYC Local Law 144 require bias audits before deploying automated decision tools.

Is AI better than humans at interview decisions?

AI can predict employment outcomes better in some controlled studies and reduce certain human biases, but it also introduces new fairness risks that require ongoing monitoring and human judgment to manage.