How interview voice analytics can boost your success

TL;DR:

- AI voice analytics measures speech patterns, tone, and emotional cues during interviews.

- Preparing with practice tools and controlling tone can improve interview performance.

- Ethical concerns include potential bias against non-native speakers and the importance of transparency.

Job interviews have always been high-stakes, but a quiet revolution is reshaping how employers assess candidates. AI-driven voice analytics is moving from corporate pilot programs into mainstream hiring workflows at a remarkable pace, with the speech analytics market expanding rapidly across recruitment applications. Yet most job seekers still walk into interviews with no idea that their tone, pace, and pauses are being measured just as carefully as their words. Understanding what this technology actually does and how to use it to your advantage can be the difference between landing the offer and wondering what went wrong.

Table of Contents

- What is interview voice analytics?

- How interview voice analytics works in interviews

- Why employers and job seekers use interview voice analytics

- How to prepare for interviews that use voice analytics

- Why interview voice analytics is only as fair as its design

- Take your interview readiness to the next level with Parakeet AI

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Voice analytics explained | AI analyzes how you speak, not just what you say, to help assess your interview performance. |
| Practice matters | Using practice tools that mimic employer analytics can help you stand out in interviews. |
| Fairness has limits | These tools promise objectivity but can still carry biases if not properly audited for fairness. |
| Preparation is key | Knowing how voice analytics evaluates speech empowers you to better prepare and succeed. |

What is interview voice analytics?

Interview voice analytics is the use of artificial intelligence to analyze spoken communication during a job interview. The technology records your speech, then processes it through machine learning models trained to identify patterns in how you talk, not just what you say. Think of it as a layer of assessment that runs in parallel with the human recruiter’s judgment.

The core elements being measured go well beyond vocabulary. AI models examine your speech rate (how fast or slowly you talk), pitch variation (whether your voice sounds flat or engaged), pause frequency (how often you hesitate and for how long), sentiment (whether your word choices lean positive or negative), and coherence (whether your answers flow logically). Some platforms even analyze emotional cues, flagging whether you sound confident, anxious, or evasive. The role of voice AI in interviews has grown from a niche experiment into a measurable factor in hiring decisions.

A common myth is that voice analytics is just a glorified keyword detector. That’s not accurate. Modern systems do track relevant terminology, but the more sophisticated analysis focuses on delivery patterns, on the premise that communication style predicts workplace effectiveness at least as much as raw content. Another misconception is that only large tech companies use this. In reality, the speech analytics market is growing across industries including finance, healthcare, retail, and logistics.

Here’s a clear breakdown of what gets evaluated and how it differs from a traditional interview:

| Voice analytics elements | Traditional interview assessment |
|---|---|
| Speech rate and pacing | General impression of fluency |
| Pitch and tonal variation | Perceived enthusiasm (subjective) |
| Pause length and frequency | Gut feeling about confidence |
| Sentiment and word choice | Interviewer’s personal interpretation |
| Emotional tone detection | Body language and facial cues |
| Coherence and logical flow | Structured answer quality |
| Filler word frequency | Noted informally, rarely scored |

Key traits voice analytics is designed to detect include:

- Filler word overuse: “um,” “uh,” and “like” are counted and can flag low confidence

- Monotone delivery: Little pitch variation often reads as disengagement

- Rushed pacing: Speaking too quickly can signal anxiety or poor preparation

- Extended pauses: Particularly at the start of answers, these can be scored as hesitation

- Negative sentiment clustering: Repeated negative framing of experiences raises flags

Understanding these elements before you walk in is a real competitive advantage.
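
To make the first trait in the list above concrete, here is a minimal sketch of filler-word counting on an interview transcript. It is an illustration only: the `FILLERS` list and the `filler_stats` function are hypothetical names, and real platforms do not publish exactly which words they count or how they weight them.

```python
import re

# Hypothetical filler list, taken from the examples in the article;
# real platforms do not publish the exact words they count.
FILLERS = ("um", "uh", "like")

def filler_stats(transcript: str) -> dict:
    """Count filler words and express them as a share of all words spoken."""
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = {filler: words.count(filler) for filler in FILLERS}
    total = sum(counts.values())
    rate = total / len(words) if words else 0.0
    return {"total_words": len(words), "filler_counts": counts, "filler_rate": rate}

answer = "So, um, basically I, uh, led the project and, like, shipped it on time."
stats = filler_stats(answer)
print(stats["filler_counts"])  # {'um': 1, 'uh': 1, 'like': 1}
print(f"{stats['filler_rate']:.0%} of words were fillers")
```

A plain word count like this cannot tell "like" used as a filler from "like" used as a verb, which is one reason to treat any single metric as a rough signal rather than a verdict.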

How interview voice analytics works in interviews

With the basics defined, it helps to understand how voice analytics operates during your interview. The process typically follows a clear sequence, and knowing each step shows you where your voice data goes and what decisions it influences.

- Recording begins. Either through a video interview platform (like HireVue or Spark Hire) or a dedicated audio capture tool, your responses are recorded in real time or stored for asynchronous review.

- Pre-processing filters the audio. The system removes background noise, separates your voice from ambient sound, and segments your responses by question.

- Feature extraction runs. The AI pulls out measurable features: fundamental frequency (pitch), amplitude variation, speaking rate in words per minute, pause markers, and linguistic sentiment signals.

- Comparison against benchmarks. Your metrics are compared to a model built from previous data, which might represent high performers in the role or industry-specific communication norms.

- A score or report is generated. Recruiters receive a dashboard showing how you ranked across communication dimensions, sometimes alongside a transcript and highlighted problem areas.

- Human review follows. Most reputable employers use these scores to supplement, not replace, human judgment. The AI flags patterns; the recruiter decides what those patterns mean in context.
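
The feature extraction and benchmark comparison steps above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any named platform works: it assumes a speech-to-text step has already produced per-word timestamps, and the `Word` class, the 0.5-second pause threshold, and the benchmark numbers are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the answer
    end: float

def extract_features(words, pause_threshold=0.5):
    """Derive speaking rate and pause markers from word timestamps."""
    if len(words) < 2:
        raise ValueError("need at least two words to measure pacing")
    duration_min = (words[-1].end - words[0].start) / 60
    gaps = [nxt.start - cur.end for cur, nxt in zip(words, words[1:])]
    pauses = [gap for gap in gaps if gap >= pause_threshold]
    return {
        "wpm": len(words) / duration_min,
        "pause_count": len(pauses),
        "longest_pause": max(pauses, default=0.0),
    }

def compare_to_benchmark(features, benchmark):
    """Z-score: how many standard deviations each metric sits from the benchmark mean."""
    return {name: (features[name] - mean) / std for name, (mean, std) in benchmark.items()}

answer = [
    Word("I", 0.0, 0.3), Word("led", 0.4, 0.7), Word("the", 0.8, 1.1),
    Word("migration", 2.0, 2.3), Word("last", 2.4, 2.7), Word("year", 2.8, 3.0),
]
features = extract_features(answer)
benchmark = {"wpm": (140.0, 20.0)}  # hypothetical (mean, standard deviation)
z_scores = compare_to_benchmark(features, benchmark)
print(features)
print(z_scores)
```

In this toy answer the speaker pauses once for roughly 0.9 seconds and lands about one standard deviation below the assumed benchmark pace. A real pipeline would also extract pitch and amplitude from the audio itself, which this sketch leaves out.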

Platforms such as Mappa and Aecho are among the AI interviewing tools that promise greater recruiter objectivity through this kind of systematic analysis. However, legal experts note that without regular auditing, these tools can introduce new forms of bias rather than eliminating old ones. Your data is typically anonymized for aggregate reporting, but individual candidate records are usually retained for a defined period under the employer’s privacy policy.

Pro Tip: Before your interview, check whether the platform used is one that applies analytics. Platforms like HireVue publish transparency reports. Search “[platform name] voice analytics” or “AI assessment methodology” to find published documentation before your session.

AI accountability in interviews is a growing topic precisely because candidates rarely know when their voice is being scored. Knowing this in advance gives you a chance to prepare deliberately rather than react blindly.

Why employers and job seekers use interview voice analytics

Understanding employer motivations helps reveal why these tools also matter for job seekers. The reasons on both sides of the hiring table are distinct but surprisingly complementary.

Employer motivations:

- Consistency across candidates. Human interviewers vary wildly in how they assess tone or confidence. Voice analytics applies the same measurement criteria to every candidate, reducing variability caused by interviewer mood or personal preferences.

- Unconscious bias reduction. When recruiters rely on gut instinct, they often favor candidates who remind them of themselves. Automated scoring on communication metrics theoretically removes some of that personal filter.

- Efficiency at scale. A company hiring for hundreds of positions can screen voice analytics reports much faster than watching dozens of hours of interview recordings.

- Data-driven hiring. Analytics create a paper trail that supports fair hiring practices and can be used to refine job profiles over time.

Job seeker motivations:

- Self-awareness before the real interview. Practicing with analytics tools reveals blind spots you cannot hear yourself: the pace you default to under pressure, the fillers you repeat without realizing it, the drop in energy toward the end of long answers.

- Targeted improvement. Instead of vague advice like “speak more confidently,” analytics give you precise feedback: your pace averaged 185 words per minute in the first answer and dropped to 150 in the third. That specificity is actionable.

- Leveling the playing field. Candidates who understand how AI tools for interview fairness work can prepare strategically, giving them an edge over candidates who assume only content matters.

| Who uses it | Primary benefit | Key concern |
|---|---|---|
| Employers | Objective, consistent screening | Audit and legal compliance risk |
| Recruiters | Faster candidate shortlisting | Over-reliance on automated scores |
| Job seekers | Practice and self-improvement | Not knowing when it is being used |
| HR teams | Data-driven hiring decisions | Privacy and data retention |

The market growth is hard to ignore. The rapid expansion of speech analytics in recruitment reflects how broadly employers across sectors are adopting these tools, so regardless of industry, you are increasingly likely to encounter some form of voice assessment. Legal experts and specialists in AI interview consistency recommend that employers disclose when these tools are in use, though disclosure practices still vary.

From a practical standpoint, job seekers who wait to “deal with it” when they encounter analytics are at a serious disadvantage compared to those who build preparation into their practice routine right now.

How to prepare for interviews that use voice analytics

Armed with knowledge about the advantages, here is how you can actively prepare for interviews using these insights. The goal is not to sound robotic or perform for a machine. The goal is to communicate clearly, confidently, and authentically, which happens to score well on every dimension these systems measure.

Five practical preparation strategies:

- Practice tone control deliberately. Record yourself answering common interview questions, then listen back specifically for energy level changes. A good benchmark: your voice should maintain consistent engagement from the first sentence to the last. Many candidates trail off in energy during longer answers, which reads as disinterest on analytics platforms.

- Slow down your baseline pace. Most people speak faster under stress. The target range for professional speech clarity is roughly 130 to 150 words per minute. If you naturally run at 180 or above, slow down by 20 percent in practice until it feels natural.

- Simulate real analytics conditions. You do not need to wait for a real interview to get data. Job seekers gain an advantage by practicing with tools that provide similar feedback on tone and language patterns before the actual evaluation.

- Eliminate filler words with structured pauses. Instead of filling silence with “um” or “uh,” practice pausing deliberately for one to two seconds before answering. This signals confidence rather than uncertainty, and it gives analytics models fewer negative markers to flag.

- Get external feedback on coherence. Ask a friend or mentor to listen to a full mock interview and specifically note whether your answers follow a clear structure. Incoherent rambling scores poorly even if your tone is excellent.
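
The pacing advice in the list above is simple arithmetic, and you can check your own numbers with a short script. This is a rough sketch: the 130 to 150 range and the 20 percent reduction come from the text, while the function names and the sample answer length are made up for illustration.

```python
TARGET_WPM = (130, 150)  # target range quoted in the text above

def words_per_minute(word_count: int, seconds: float) -> float:
    """Speaking rate for one recorded answer."""
    return word_count / (seconds / 60)

def pace_advice(wpm: float, reduction: float = 0.20) -> str:
    """Compare a measured pace with the target range and suggest a slowdown."""
    low, high = TARGET_WPM
    if wpm > high:
        slowed = wpm * (1 - reduction)
        return f"{wpm:.0f} wpm is fast; slowing by {reduction:.0%} gives about {slowed:.0f} wpm"
    if wpm < low:
        return f"{wpm:.0f} wpm is below the {low}-{high} range"
    return f"{wpm:.0f} wpm is within the {low}-{high} range"

pace = words_per_minute(270, 90)  # 270 words in a 90-second answer
print(pace_advice(pace))  # 180 wpm is fast; slowing by 20% gives about 144 wpm
```

To use it, count the words in a transcript of your recorded answer and time the recording. A speaker at 180 words per minute who slows by 20 percent lands at about 144, inside the target range.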

Common mistakes that AI models flag include starting answers with “So, um, basically,” speaking in monotone during technical explanations, using heavily negative framing when discussing past challenges, and running answers so long that sentiment data gets diluted.

Pro Tip: Set up a 30-minute practice session using a video platform that lets you review playback. Watch once with the sound off to check body language, then listen once with your eyes closed to focus purely on vocal qualities. This split-focus technique surfaces issues that standard review misses.

For continued preparation, read up on preparing for video interviews and on how to leverage AI for interviews, then build a systematic practice routine that mirrors what employers actually evaluate.

Why interview voice analytics is only as fair as its design

There is a version of the voice analytics conversation that presents this technology as a purely objective solution to human bias. That version is incomplete, and you deserve to know why.

Voice analytics models are trained on historical data. If that data over-represents a particular demographic, communication style, or accent, the model learns to reward what it has seen most often. A candidate with a non-native accent, a stutter, or an atypical speech rhythm can be systematically scored lower for reasons that have nothing to do with their actual competence. Legal experts warn that compliance risks are significant, particularly around accent and disability-related bias, and that regular third-party audits are essential for these tools to be genuinely fair.

The promise of objectivity is real but conditional. It requires ongoing auditing, diverse training data, and transparent reporting. Most employers deploying these tools are not doing all three consistently.
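
As a rough idea of what one auditing check might look like, the sketch below compares pass rates across groups of candidates. It is not a method described by any vendor or by this article: the data is invented, the function names are hypothetical, and the 0.8 cutoff borrows the four-fifths rule of thumb from US employee-selection guidance purely as an example threshold.

```python
from collections import defaultdict

def pass_rates(records):
    """records: iterable of (group, passed) pairs; returns the pass rate per group."""
    totals, passed = defaultdict(int), defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        passed[group] += int(ok)
    return {group: passed[group] / totals[group] for group in totals}

def impact_ratios(rates):
    """Each group's pass rate divided by the highest group's pass rate."""
    best = max(rates.values())
    if best == 0:
        return {group: 0.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}

# Invented screening outcomes: 8 of 10 native speakers pass, 5 of 10 non-native.
records = (
    [("native", True)] * 8 + [("native", False)] * 2
    + [("non_native", True)] * 5 + [("non_native", False)] * 5
)
ratios = impact_ratios(pass_rates(records))
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(flagged)  # ['non_native']
```

A flag like this does not prove the model is biased, but it is the kind of signal that should trigger a closer human review of how the scores are being produced.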

What can you do with this knowledge? First, if you believe an AI assessment scored you unfairly, ask the employer about their review and appeals process. Some jurisdictions are moving toward requiring this. Second, seek feedback from human recruiters wherever possible to cross-reference machine assessments. Third, read up on AI interview bias challenges to understand your rights and the emerging legal landscape around AI-driven hiring tools. Understanding the system’s limitations does not mean you cannot use it to your advantage. It means you go in with clear eyes.

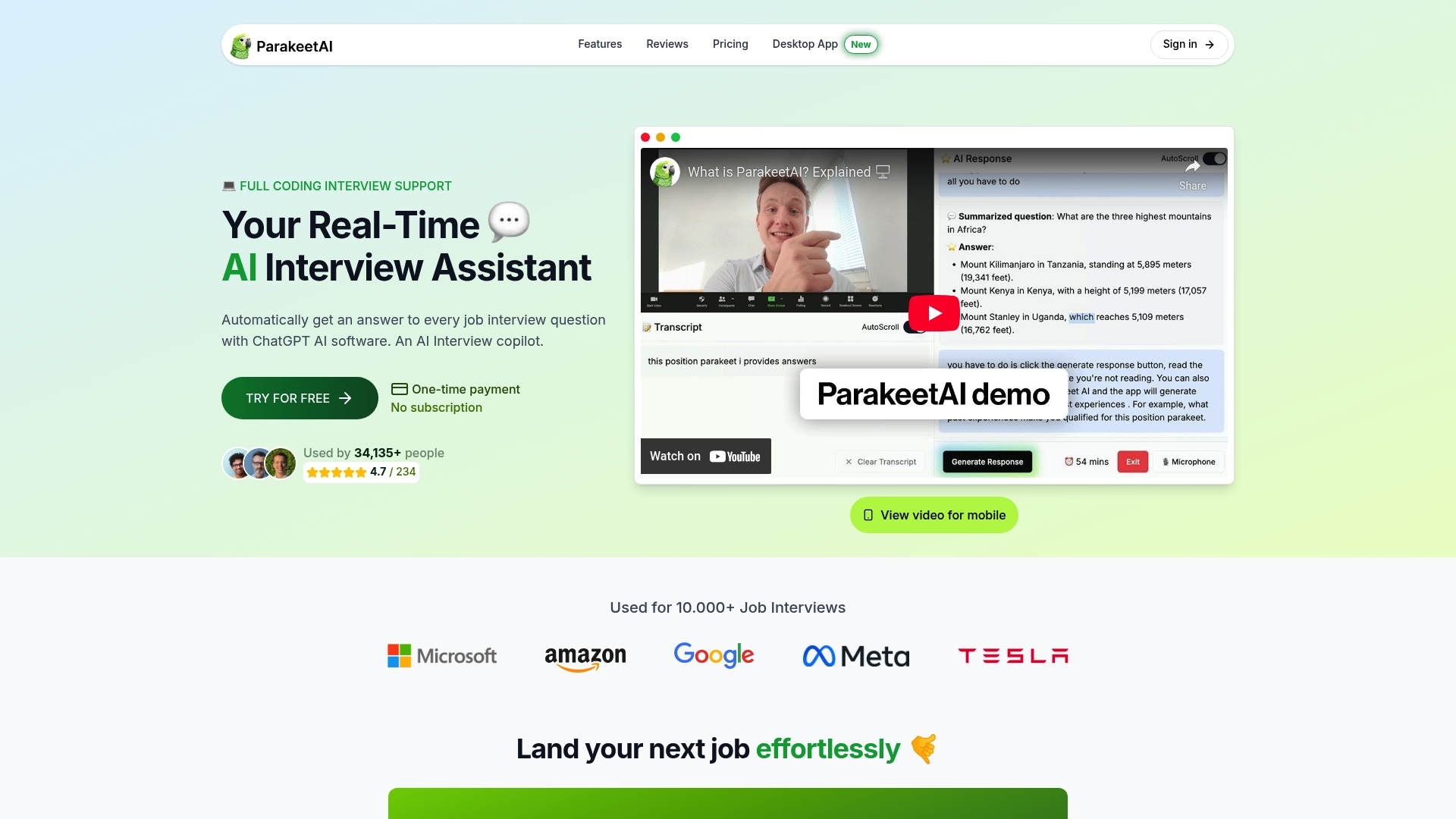

Take your interview readiness to the next level with Parakeet AI

Having explored both the power and the limitations of voice analytics, here is a step you can take right now.

Parakeet AI is a real-time AI interview assistant that listens to your interview as it happens and automatically provides answers to every question using AI. Whether you are preparing for a voice-analyzed screening or a live panel interview, Parakeet AI gives you the kind of in-the-moment support that turns preparation into performance. You get real-time response suggestions, tone-aware guidance, and the confidence of knowing you are never alone in the room. Visit parakeet-ai.com to see how it works and start practicing in a safe, feedback-rich environment built specifically for job seekers who want to win their next interview.

Frequently asked questions

What does interview voice analytics evaluate?

It evaluates your speech patterns, tone, pacing, and emotional cues to give employers or practice tools insights into your communication. Specifically, voice analytics reviews pitch, pace, and tone alongside language sentiment to generate a structured assessment.

Can interview voice analytics introduce bias?

Yes. Legal experts warn that these tools can introduce bias against accents, disabilities, or unique communication styles if they are not properly audited. Candidates should ask employers about their audit process if they have concerns.

How can I prepare for an interview using voice analytics?

Simulate interviews with AI tools that provide feedback on your tone and language, and practice clear, confident speaking. Job seekers gain an advantage by practicing with similar tools before their actual evaluation.

Are my interview answers private if analyzed by AI?

Most reputable tools anonymize your data, but it is important to check privacy policies for specifics. Privacy considerations and data retention vary significantly between platforms and jurisdictions.