How interview answer detection works: AI strategies and real facts

TL;DR: AI-powered interview answer detection now operates with 94–97% accuracy, analyzing responses for authenticity and consistency. Candidates can improve their chances by providing genuine reasoning, specific examples, and embracing authentic imperfections.

AI-powered interview answer detection isn’t experimental anymore. It’s running quietly in the background of thousands of hiring pipelines right now, scoring your responses, flagging inconsistencies, and sending reports to recruiters before you’ve even closed your laptop. Many job seekers assume these systems are unreliable or easy to fool, but the data tells a different story. Modern AI detectors reach 94–97% accuracy on standard questions. This article breaks down exactly how these systems work, where their limits are, and what you can do to walk into any AI-monitored interview with genuine confidence.

Table of Contents

- What is interview answer detection?

- How do AI tools detect and score your interview answers?

- Leading platforms: Benchmarks and performance compared

- Ways to approach interviews in the age of AI detection

- What most job seekers get wrong about AI answer detection

- Enhance your interview readiness with Parakeet AI

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI tools are highly accurate | Top detectors like Polygraf claim 98%+ accuracy, but real performance varies by context. |
| Reasoning is key | Demonstrating your unique thought process is the best way to stand out against AI detection. |
| Benchmarks have limits | Many public AI benchmarks don’t capture all interview settings, so results should be viewed with caution. |
| Strategy beats memorization | Responding with real examples and decisions will serve you better than rote answers. |

What is interview answer detection?

Interview answer detection is the use of AI algorithms to evaluate whether a candidate’s responses are authentic, original, and consistent with real human reasoning. The technology started as an anti-cheating measure for remote assessments, but it has grown into something broader. Today, companies use it to measure communication quality, cultural fit signals, and even job-specific problem-solving ability.

The core idea is straightforward: an AI system analyzes the structure, vocabulary, and reasoning patterns in your answers and compares them against large datasets of both human-written and AI-generated text. If your response resembles patterns commonly found in generated content, or if it lacks the small imperfections and reasoning detours typical of genuine human thought, the tool flags it.

Here’s what most of these systems are actually looking for:

- Linguistic naturalness: Real answers have false starts, self-corrections, and varied sentence lengths. Generated answers tend to be unnaturally smooth.

- Reasoning depth: Authentic responses include trade-offs, doubts, and personal context. Generic answers often skip directly to conclusions.

- Consistency over time: AI tools compare your early answers to your later ones. Sudden shifts in vocabulary or complexity raise flags.

- Contextual specificity: Vague answers that could apply to any job or company score lower on authenticity metrics.

These tools also play an increasingly significant role in pre-employment testing, where written assessments and async video responses are evaluated automatically before a human recruiter ever looks at your file. General AI text detectors now achieve 94–97% accuracy in controlled benchmarks, which means this is a serious layer of scrutiny, not a checkbox.
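To make the signals listed earlier in this section more concrete, here is a minimal Python sketch of how a few of them could be approximated from raw text. It is an illustration only: the function name `naturalness_features`, the `HEDGES` phrase list, and the choice of metrics are assumptions made for this example, not the method of any named vendor.

```python
import re
from statistics import mean, pstdev

# Hypothetical list of hedging / self-correction phrases (an assumption for this sketch).
HEDGES = ("i think", "maybe", "actually", "let me", "i guess", "not sure")


def naturalness_features(answer: str) -> dict:
    """Compute crude proxies for the 'linguistic naturalness' signals."""
    # Split into sentences on runs of ., ! or ? and drop empty pieces.
    sentences = [s.strip() for s in re.split(r"[.!?]+", answer) if s.strip()]
    lengths = [len(s.split()) for s in sentences]

    lowered = answer.lower()
    words = re.findall(r"[a-z']+", lowered)

    return {
        "sentence_count": len(sentences),
        "mean_sentence_len": mean(lengths) if lengths else 0.0,
        # Spread of sentence lengths: near zero means uniform sentences.
        "sentence_len_spread": pstdev(lengths) if lengths else 0.0,
        # Share of distinct words: a rough stand-in for vocabulary range.
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
        # How many hedging phrases appear in the answer.
        "hedge_count": sum(lowered.count(h) for h in HEDGES),
    }


features = naturalness_features(
    "Actually, let me think. I led a three-person team for six weeks. It was hard."
)
print(features)
```

A real detector would feed features like these into a trained model rather than read them directly. The point is only that "varied sentence lengths" and "self-corrections" are measurable properties of text.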

How do AI tools detect and score your interview answers?

The mechanics behind these systems are more sophisticated than most candidates realize. Here is a step-by-step breakdown of what happens from the moment you submit or speak an answer.

- Answer collection. Your response is captured as text (typed) or audio (spoken). Some platforms combine both channels, analyzing what you say and how you say it simultaneously.

- Transcription. For spoken answers, the audio is converted to text. High-end tools like Polygraf report 99.9% transcription accuracy, which means very few words are lost or misread before analysis begins.

- Feature extraction. The AI pulls linguistic signals from your text: sentence complexity, vocabulary range, transition words, hedging language, and more. It also tracks logical flow, checking whether your answer shows cause-and-effect reasoning or only a string of isolated factual statements.

- Pattern comparison. Your extracted features are matched against training data containing both authentic human answers and AI-generated ones. The model calculates a probability score for each response.

- Originality and consistency scoring. The system checks your answers against each other and against your application materials. Inconsistent vocabulary or sudden complexity spikes are treated as anomalies.

- Final report generation. A combined score is produced and sent to the recruiter, sometimes with flagged sections highlighted for manual review.
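The consistency step in the breakdown above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: mean word length stands in for "complexity", and the names `complexity`, `flag_complexity_spikes`, and the `max_ratio` threshold are hypothetical. Vendors do not publish their actual scoring logic.

```python
import re
from statistics import mean


def complexity(answer: str) -> float:
    """Mean word length, a crude stand-in for vocabulary complexity."""
    words = re.findall(r"[A-Za-z']+", answer)
    return mean([len(w) for w in words]) if words else 0.0


def flag_complexity_spikes(answers: list[str], max_ratio: float = 1.4) -> list[int]:
    """Return indexes of answers far more complex than the candidate's other answers."""
    scores = [complexity(a) for a in answers]
    flagged = []
    for i, score in enumerate(scores):
        # Baseline = average complexity of every other answer (leave-one-out).
        others = scores[:i] + scores[i + 1:]
        if not others:
            continue
        baseline = mean(others)
        if baseline > 0 and score / baseline > max_ratio:
            flagged.append(i)
    return flagged


answers = [
    "I ran the demo and it went ok.",
    "We cut the bug list in half by May.",
    "Subsequently, organizational stakeholders operationalized comprehensive methodologies.",
]
print(flag_complexity_spikes(answers))  # the third answer (index 2) stands out
```

The leave-one-out baseline matters here: comparing each answer to the average of the others keeps one extreme answer from hiding itself by dragging the overall average upward.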

The table below shows how different types of questions affect AI scoring performance.

| Question type | AI scoring reliability | Key challenge |
|---|---|---|
| Behavioral (STAR format) | High | Easy to detect over-rehearsed patterns |
| Technical knowledge | High | Checks for depth vs. surface recall |
| Situational judgment | Medium | Requires understanding of context nuance |
| Open-ended creativity | Medium | Harder to define “authentic” baseline |
| Unusual or niche topics | Lower | Limited training data increases error risk |

One important nuance: AI tools that perform well on common question types can score interview performance less reliably when the question is unusual or highly domain-specific. The model simply has less training data to work from, which means its predictions become less certain and more answers get routed to manual review rather than scored automatically.
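One way to picture this routing is a simple confidence rule: answers whose probability score sits close to 50/50 go to a human, and the "too close to call" band gets wider for unfamiliar topics. The sketch below assumes that design. The function `route_answer` and the `margin` values are hypothetical and chosen only for illustration.

```python
def route_answer(p_generated: float, margin: float = 0.3) -> str:
    """Decide whether a probability score is confident enough to act on automatically.

    p_generated: model's estimated probability that the answer is AI-generated (0 to 1).
    margin: how far from 0.5 the score must be before a human is skipped.
    """
    if not 0.0 <= p_generated <= 1.0:
        raise ValueError("p_generated must be between 0 and 1")
    distance_from_coin_flip = abs(p_generated - 0.5)
    if distance_from_coin_flip >= margin:
        return "automatic_scoring"
    return "manual_review"


# Common question type: a narrow uncertainty band.
print(route_answer(0.9))               # automatic_scoring
# Niche topic: the same score is no longer trusted without a human.
print(route_answer(0.9, margin=0.45))  # manual_review
```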

Pro Tip: When answering any behavioral or situational question, walk through your decision process out loud. Say things like “I considered two options here” or “my concern at that point was…” This reasoning trail is very difficult for AI to fake and very easy for AI to recognize as authentic.

One area where AI tools for remote interview settings have improved dramatically is multimodal analysis. Some platforms now cross-reference your audio tone, response latency (how long you pause before answering), and eye movement patterns with your text content. A perfectly articulate, zero-hesitation response delivered without any natural pauses can actually score lower on authenticity than a slightly rougher answer that includes genuine thinking time.

Leading platforms: Benchmarks and performance compared

Understanding the technical aspects helps, but the real picture comes from seeing how leading platforms perform side by side and where their limits show up.

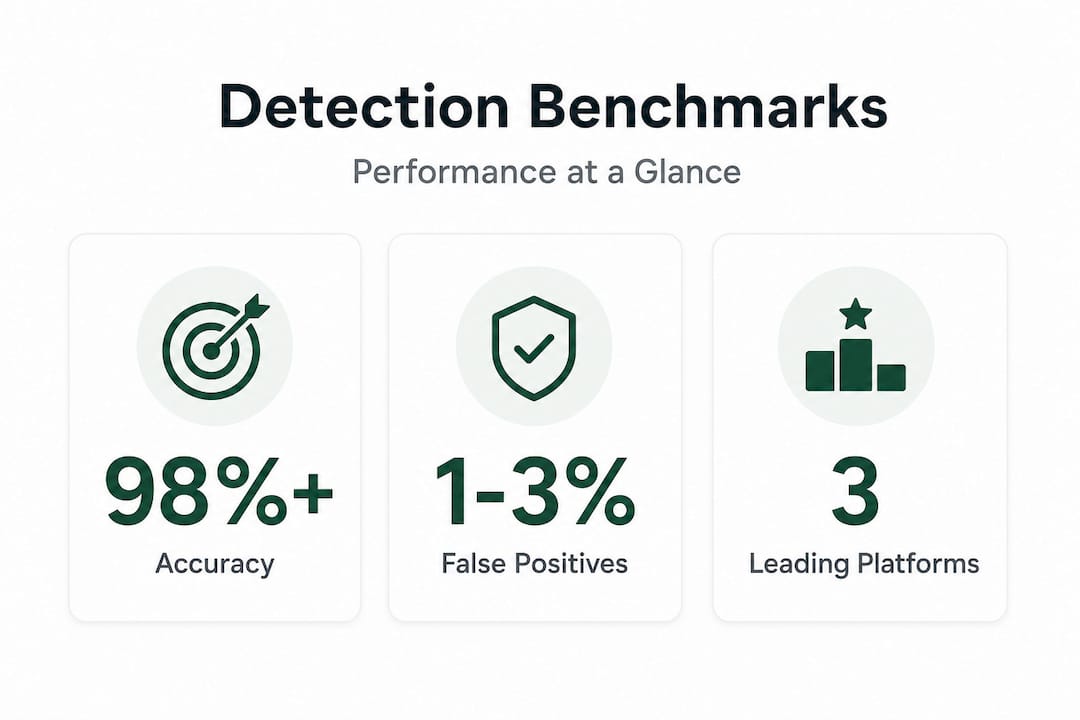

Public data on these platforms is limited, but what does exist is worth examining carefully. Polygraf, one of the more documented platforms, claims 98%+ accuracy on AI cheating detection for specific, named AI tools and 99.9% on transcription tasks. Fabric, another tool cited in interview-specific benchmarks, detects approximately 85% of cheating cases. General AI text detectors, which many smaller recruiting platforms rely on, sit in the 94–97% range on standard questions.

| Platform | Cheating detection rate | Transcription accuracy | False positive rate |
|---|---|---|---|
| Polygraf | 98%+ | 99.9% | Under 2% (claimed) |
| Fabric | 85% | Not specified | Not publicly available |
| General AI detectors | 94–97% | Varies by tool | 1–3% typical |
| Niche/domain-specific tools | Variable | Varies | Not published; AUROC can drop 5–30 points on unfamiliar topics |

A critical issue with these numbers is context. Most benchmarks were run under controlled conditions with standard question formats. According to Polygraf’s published data, general AI detectors can experience a 5- to 30-point drop in AUROC (area under the ROC curve, a standard measure of how well a classifier separates two classes) when domain or question style shifts significantly. This is called “domain shift,” and it’s a real risk in specialized industries like biotech, law, or creative fields where question formats differ from the training data.
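For readers unfamiliar with AUROC, here is a small Python sketch of what the number means and how a drop shows up. AUROC can be read as the chance that a randomly chosen AI-generated answer gets a higher detector score than a randomly chosen human answer. All scores below are invented toy data for illustration, not figures from Polygraf or any other vendor.

```python
def auroc(human_scores: list[float], generated_scores: list[float]) -> float:
    """Fraction of (generated, human) pairs where the generated answer scores higher.

    Ties count as half a win. 1.0 means perfect separation, 0.5 means coin flip.
    """
    wins = 0.0
    for g in generated_scores:
        for h in human_scores:
            if g > h:
                wins += 1.0
            elif g == h:
                wins += 0.5
    return wins / (len(generated_scores) * len(human_scores))


# Toy detector scores (higher = "looks more AI-generated"). Invented numbers.
generated = [0.6, 0.7, 0.8, 0.9]
human_in_domain = [0.1, 0.2, 0.3, 0.4]   # familiar question style
human_shifted = [0.1, 0.2, 0.3, 0.75]    # one genuine niche answer scores high

in_domain = auroc(human_in_domain, generated)
shifted = auroc(human_shifted, generated)
print(in_domain, shifted)                    # 1.0 0.875
print(round((in_domain - shifted) * 100, 1))  # 12.5 point drop
```

In this toy case a single genuine answer that "looks generated" is enough to pull AUROC down by 12.5 points. That is the kind of effect domain shift produces at scale.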

“Empirical gaps in public benchmarks highlight the need for transparency in vendor claims.” This quote from Detector Checker AI Benchmarks captures something important: many recruiters trust these tools without fully understanding where they break down.

The false positive concern is real but manageable. A 1–3% false positive rate sounds small until you consider that a recruiter reviewing fifty genuine candidates should expect roughly one of them to be wrongly flagged. If that happens to be you, having a strong track record of explaining your reasoning becomes your best defense. It is worth noting that many platforms allow recruiters to set sensitivity thresholds, which means false positive rates can be adjusted up or down depending on the hiring team’s preferences.
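The arithmetic behind a claim like this is easy to check. The sketch below assumes every candidate is genuine and that each answer is flagged independently with the same false positive rate. Both are simplifications, and the function names are made up for this example.

```python
def expected_false_flags(n_genuine: int, false_positive_rate: float) -> float:
    """Average number of genuine candidates wrongly flagged."""
    return n_genuine * false_positive_rate


def prob_at_least_one_false_flag(n_genuine: int, false_positive_rate: float) -> float:
    """Chance that at least one genuine candidate is wrongly flagged,
    assuming independent decisions."""
    return 1 - (1 - false_positive_rate) ** n_genuine


for rate in (0.01, 0.02, 0.03):
    expected = expected_false_flags(50, rate)
    chance = prob_at_least_one_false_flag(50, rate)
    print(f"FPR {rate:.0%}: expect {expected:.1f} wrongly flagged, "
          f"{chance:.0%} chance of at least one")
```

With fifty genuine candidates, the expected number of wrong flags runs from 0.5 at a 1% rate to 1.5 at a 3% rate. Raising a platform's sensitivity threshold moves that rate, and these numbers, up with it.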

Why do companies invest in these automated interview tools? Partly to save time and partly to reduce hiring bias. AI scoring removes some of the interviewer subjectivity that leads to inconsistent hiring decisions. The problem is that it introduces a new kind of bias: algorithmic bias, where candidates who naturally communicate in ways that differ from the training data (non-native speakers, people with certain cognitive styles, or candidates from industries with different communication norms) may score lower through no fault of their own.

Ways to approach interviews in the age of AI detection

Knowing the tools’ strengths and weaknesses, here’s how you can put that information into practice and stand out in AI-enabled interviews.

The single biggest mistake candidates make is trying to sound perfect. AI systems are trained on human responses, and humans aren’t perfect. When you deliver an answer that sounds flawlessly structured and covers every possible angle without hesitation, it actually raises a red flag. Authenticity, in AI terms, looks a little rough around the edges.

Here are the most effective ways to approach an AI-monitored interview:

- Use specific, real examples. Mention actual project names, team sizes, timeframes, and outcomes. Generic answers like “I worked on a team to solve a problem” score far lower than “I led a three-person team for six weeks trying to reduce support ticket volume by 20%.”

- Verbalize your trade-offs. When you say “I chose option A over option B because of X,” you are demonstrating reasoning that AI genuinely struggles to fabricate convincingly.

- Ask clarifying questions. Pausing to ask “When you say stakeholder management, do you mean internal teams or external clients?” signals genuine engagement and shows that you are processing the question rather than retrieving a stored answer.

- Embrace imperfect phrasing. Saying “let me think through that for a second” or “actually, I want to revise what I said” reads as authentic to both human interviewers and AI scoring systems.

- Prepare for edge cases. If you have practiced AI-driven question matching, make sure you aren’t just drilling perfect answers. Practice answering variations and unexpected follow-ups so your flexibility comes through naturally.

Pro Tip: Spend time practicing “why” questions rather than “what” questions. Why did you choose that approach? Why did you prioritize that outcome? Why did you disagree with your manager? These are the questions that reveal real thinking, and they are also the ones that most clearly distinguish human responses from AI-generated ones according to Detector Checker’s benchmarks.

One final thing to keep in mind: vendor accuracy claims don’t always reflect your industry. A platform tested on software engineering interviews may perform very differently in a creative, legal, or healthcare hiring context. Gaps in public test data are significant, and transparency from vendors about where their tools were trained is still limited.

What most job seekers get wrong about AI answer detection

Here is the uncomfortable truth that most articles on this topic won’t tell you: the candidates who spend the most energy trying to “beat” AI detection systems are usually the ones who perform worst overall.

There is a logical trap in the way job seekers think about this. They hear “AI is watching your answers” and immediately think about evasion tactics. How do I avoid triggering the detector? How do I sound human enough? This framing is completely backwards. The AI isn’t your audience. The recruiter is. The AI is just a filter, and filters let things through that match the criteria.

What AI detection has actually done is raise the bar for what “good” looks like in an interview. It has made hiring teams more interested in reasoning quality, adaptability, and genuine problem-solving. That’s not a trap; it’s an opportunity. The candidates who score highest on interview performance metrics are the ones who treat interviews as a genuine conversation about how they think.

The other misconception is that AI detection is binary. Many candidates think they either “pass” or “fail” the AI scan. In reality, most systems produce a confidence score that goes to a human reviewer. Even a flagged answer can be overridden if the recruiter finds it credible in context. Your job isn’t to score 100% on the AI. Your job is to give a recruiter a reason to advocate for you.

According to Detector Checker’s analysis, the most effective differentiator in AI-monitored interviews is reasoning depth, specifically the ability to discuss edge cases and trade-offs. Memorized answers can’t do that. Genuine experience, even imperfect experience, can.

The deepest insight here is that AI detection has inadvertently rewarded the thing good interviewers always valued: authenticity, intellectual honesty, and the willingness to say “I don’t know, but here’s how I’d figure it out.” If that’s your natural style, you have nothing to fear from these systems.

Enhance your interview readiness with Parakeet AI

With the facts and myths of AI answer detection clarified, the smartest next move is sharpening your own preparation so your genuine skills come through clearly in every answer.

Parakeet AI is a real-time interview assistant that listens to your interview as it happens and instantly provides tailored, relevant answers to every question. Whether you’re heading into a technical screen or a behavioral panel, Parakeet AI helps you respond with confidence and authenticity. Explore our collection of interview answer generator tools to discover research-backed strategies for standing out in AI-monitored hiring processes. The goal isn’t to game the system. It’s to show up fully prepared so your real strengths are impossible to miss.

Frequently asked questions

How reliable are AI interview answer detectors?

Leading tools like Polygraf and Fabric claim 85–99% accuracy in controlled benchmarks, but real-world performance can vary significantly depending on industry, question type, and how the platform was trained.

Can AI accurately tell if I used prepared answers?

AI tools are strong at catching memorized or generic answers, but answers built on real stories, specific numbers, and your own reasoning are far less likely to be flagged as rehearsed by any automated system.

Should I worry about false positives during my interview?

False positive rates are typically 1–3% with top AI detectors, so the risk is low, but explaining your reasoning clearly throughout the interview gives you a natural buffer against any algorithmic misread.

What if the AI makes a mistake judging my answer?

AI detectors are 94–97% accurate on typical questions, but errors do happen, especially with unusual topics. A human recruiter usually reviews flagged responses, so your overall consistency and reasoning quality remain your best protection.